Artificial intelligence (AI) involves machines capable of computing, analysing, reasoning, learning, and uncovering meaning, among other tasks. It combines computer science and linguistics to create machines that can perform tasks requiring human intelligence. The rapid expansion of AI technologies brings both opportunities and challenges to Public Health.

AI has the potential to transform public health and healthcare by improving diagnostics, reducing medical errors, developing new treatments, assisting healthcare workers, testing health policies’ efficacy, and expanding access to care. For instance, ChatGPT, an AI language model developed by OpenAI, can assist individuals and communities in making informed decisions about their health by generating human-like prose based on extensive data.

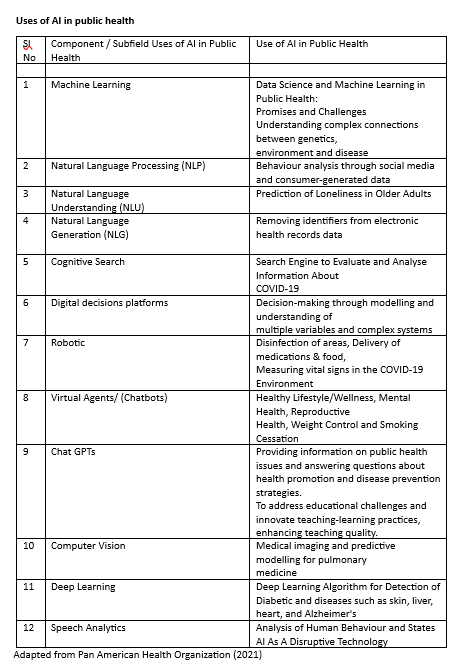

AI finds applications across all areas of public health, including health promotion, health surveillance, health protection, population health assessment, disease and injury prevention, and emergency prediction, preparedness, and response. AI-based medical devices, algorithms, and other emerging sectors show promise in monitoring, prevention, diagnosis, and health management, supporting public health efforts.

However, the impact and implications of AI on public health warrant attention. While AI offers potential benefits, there are also concerns about its adverse effects on health. Discussions often focus on misapplication in clinical settings, but researchers highlight the potential harms stemming from AI’s impact on social determinants of health, manipulation of individuals, use of lethal autonomous weapons, effects on work and employment, and threats posed by self-improving artificial general intelligence.

Moreover, the gathering, organisation, and analysis of personal information by AI, along with expanded surveillance systems, raise concerns about privacy and the potential for misleading marketing and information campaigns. The development of Lethal Autonomous Weapon Systems (LAWS) brings forth another set of threats, as they can engage human targets without human intervention, increasing risks to health and well-being.

Revolutionising public health

While AI holds immense promise in revolutionising public health, it is crucial to consider and address the potential risks and challenges associated with its deployment to ensure its responsible and ethical use for the betterment of public health and well-being.

The third set of dangers stems from the job losses resulting from widespread adoption of AI technology. It is projected that tens to hundreds of millions of jobs will be lost in the next decade due to AI-driven automation. The actual scale will depend on the pace of technological advancement, including robots, artificial intelligence, and other technologies, as well as on societal and governmental policies. Such projections warrant caution, however: scholars once anticipated complete automation of human labour shortly after the year 2000.

In this decade, AI-driven automation is expected to have a disproportionate negative impact on low- and middle-income countries, primarily affecting lower-skilled employment. As automation progresses up the skill ladder, it will gradually replace larger portions of the global workforce, including those in high-income nations.

AI is being used in various ways in Public Health (see Table 1). However, society’s ability to manage the transition from manual labour to an AI-led labour market will be a crucial determinant of health. Despite the many benefits of moving away from repetitive, dangerous, and unpleasant work, unemployment is strongly associated with adverse health outcomes and behaviours, such as harmful alcohol and illicit drug use, obesity, lower self-rated quality of life and health, higher levels of depression, and an increased risk of suicide.

An optimistic scenario emerges from the potential benefits of AI, envisioning a world where increased productivity lifts everyone out of poverty and eliminates the need for labour-intensive jobs, predominantly replaced by AI-enhanced technology. However, there is no guarantee that the productivity gains from AI will be equitably shared among all members of society. Historically, rising automation has tended to redistribute income and wealth from workers to capital owners, contributing to growing wealth inequality worldwide.

A general-purpose self-improving AI, known as AGI, has the theoretical capability to learn and perform a wide range of human tasks. It can potentially overcome limitations in its code and begin developing its own purposes by learning from and recursively improving its code. Alternatively, humans could give it this capability from the beginning. Unfortunately, the public health community lacks tools and concepts to fully understand the implications of AGI for human health and well-being, as well as for ‘One Health.’

To avoid harm, effective regulation of AI development and use is necessary. The window of opportunity to prevent catastrophic consequences is closing due to the exponential rise of AI research and development. Future advancements in artificial intelligence, including artificial general intelligence, will depend on current policy choices and the effectiveness of regulatory institutions in mitigating risks, minimising harm, and maximising benefits.

Michelle Bachelet, former head of the UN Office of the High Commissioner for Human Rights, urged all nations to halt the sale and use of AI systems until sufficient protections are in place to prevent the “negative, even catastrophic” risks they pose. Additionally, UNESCO proposed an agreement to guide the development of an appropriate legal framework to ensure the ethical growth of AI.

The European Union Artificial Intelligence Act (2021) identifies four categories of risk for AI systems: unacceptable, high, limited, and minimal. Although it falls short of safeguarding several fundamental human rights and prohibiting AI from exacerbating existing disparities and discrimination, this Act can serve as an initial step towards a global convention.

The World Health Organization issued its first global report on AI in health, which addresses the ethical issues and risks associated with applying AI in health. The report also provides a list of recommendations to ensure that the governance of AI for health fully realises the technology’s potential, holds all stakeholders accountable, and remains responsive to the communities and individuals affected by its use, as well as the healthcare workers who rely on these technologies.

The WHO has established six principles to ensure that AI works in the public interest across all countries:

- Protecting human autonomy: Humans should retain control over healthcare systems and medical decisions. Privacy and confidentiality should be safeguarded, and patients must provide valid informed consent through appropriate legal frameworks for data protection.

- Promoting human well-being, safety, and the public interest: The designers of AI technologies should comply with regulatory requirements for safety, accuracy, and efficacy for well-defined use cases or indications.

- Ensuring transparency and intelligibility: Sufficient information should be published or documented before the design or deployment of an AI technology. This information must be easily accessible and facilitate meaningful public consultation and debate on the technology’s design and its appropriate usage.

- Fostering responsibility and accountability: Stakeholders must ensure that AI is used under appropriate conditions and by adequately trained individuals. Furthermore, effective mechanisms should be in place for questioning decisions based on algorithms and for providing redress to the individuals and groups adversely affected by them.

- Ensuring inclusiveness and equity: AI for health should encourage the broadest possible equitable use and access, regardless of age, sex, gender, income, race, ethnicity, sexual orientation, ability, or other protected characteristics under human rights codes.

- Promoting responsive and sustainable artificial intelligence: The designers, developers, and users of AI should continuously, systematically, and transparently assess an AI technology to determine whether it responds adequately to, and aligns with, communicated expectations and requirements within the context of its use. AI systems should aim to minimise adverse environmental effects and maximise energy efficiency. Additionally, governments and businesses should prepare for anticipated workplace disruptions, such as employment losses due to automated systems, and ensure healthcare professionals are trained to use AI systems.

When applying AI solutions in public health, bioethical concepts such as beneficence, non-maleficence, autonomy, and justice must be considered, along with human rights principles, including dignity, respect for life, freedom, self-determination, equity, justice, and privacy.

The most significant challenge for the public health community is to raise awareness about the dangers and threats posed by artificial intelligence. Serious consideration is necessary to prevent unregulated development and mitigate the adverse and potentially catastrophic effects of AI-enhanced technologies. Additionally, public health expertise should be used to advocate for a fundamental and substantial re-evaluation of social and economic policies based on evidence-informed arguments.

It is crucial to monitor organisations that rush the development of AI and those seeking to exploit it for selfish profit. This includes ensuring transparency and accountability in the military-corporate industrial complex, which is driving AI breakthroughs, as well as in social media corporations that allow AI-driven targeted misinformation to undermine democratic institutions and violate privacy rights.

The United Nations is actively working to ensure that global social, political, and legal institutions keep pace with the rapid technological advances in AI. For instance, the UN established the High-Level Panel on Digital Cooperation to facilitate international discussions and cooperative strategies for a secure and inclusive digital future. However, the UN still lacks a legally binding mechanism to regulate AI and ensure global responsibility. Until effective regulation is in place, it is advisable to implement a moratorium on the development of self-improving artificial general intelligence.

Dr Joe Thomas is Global Public Health Chair at Sustainable Policy Solutions Foundation, a policy think tank based in New Delhi. He is also Professor of Public Health at Institute of Health and Management, Victoria, Australia. Opinions expressed in this article are personal.